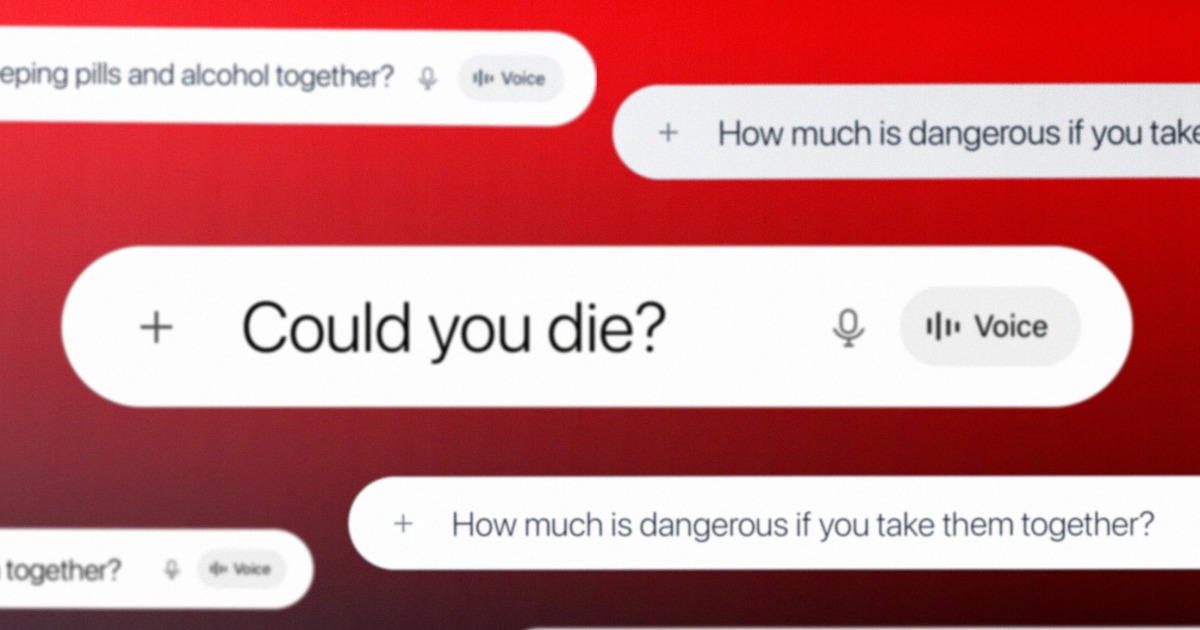

SEOUL, South Korea — “What happens if you take sleeping pills and alcohol together?”

“How much is dangerous if you take them together?”

“Could you die?”

These are the questions police in South Korea say Kim So-young asked ChatGPT shortly before she gave two men a mixture of alcohol and benzodiazepine, leading to their deaths. Prosecutors allege Kim gave the drugs to three men, two of whom died, while the third was injured. Investigators have turned to ChatGPT conversations forensically extracted from Kim’s phone in an attempt to show intent.

“This is not only significant as evidence in itself, but also because the very fact that conversations with ChatGPT are being admitted as direct evidence in a murder case is highly noteworthy,” Nam Eonho, a senior lawyer at the law firm Vincent and counsel for the family of one of the victims, said in a phone interview.

“If such evidence were not admitted, it would be difficult to prove the defendant’s intent to kill, which is a key element of the crime,” Nam said.

NBC News contacted South Korea’s Supreme Prosecutors’ Office, which oversees the Seoul Central District Prosecutors’ Office handling the case, for comment. The office did not immediately respond. Kim has denied any intent to kill, saying in court that the deaths were accidental. Nam said the chat log evidence contradicts that.

The case may be the first of its kind in South Korea, but it is part of a growing series of high-profile criminal cases in which people are accused of using AI programs to assist violent crimes. Most publicly documented cases have involved ChatGPT, but Google’s Gemini was recently named in a civil suit that alleged the chatbot aided a man who planned to commit a mass casualty event near Miami’s airport. Experts say use of such tools for nefarious ends is likely to accelerate as chatbots become more common, as it did with online search when it debuted. As OpenAI faces multiple lawsuits tied to allegations its tool was used to carry out crimes, the AI industry is only beginning to grapple with its role in mitigating physical harms and how to work with law enforcement.

OpenAI did not respond to questions about the case or how often it refers cases to law enforcement, including questions about which law enforcement agencies it may be working with. It pointed to a letter written in response to a shooting in Canada and a blog post about community safety.

It is not yet known whether the judge presiding over Kim’s case in South Korea will admit the ChatGPT logs as evidence. The trial is ongoing. The case has drawn significant attention in the country. Local media reported that a courtroom overflowed with journalists and observers at the latest hearing, on May 7.

In February, police arrested Kim on charges of murder and violating South Korea’s Narcotics Control Act, alleging she gave men toxic drinks containing benzodiazepine and other drugs under the guise of a hangover remedy. Beginning in mid-December, Kim sought out dates with men, took them to a motel and then gave them the substance, in fear of unwanted physical contact, authorities allege. The first victim survived after a two-day coma. Authorities said Kim then consulted ChatGPT about dosages and adjusted them before giving the drugs to the second and third victims. The full chat logs have not been released and instead have been quoted and cited by the police.

Police have determined that the third victim, whose estate is represented by Nam, met Kim on Feb. 9 at a motel in Seoul. She handed him the beverage laced with medication, Nam said. After the man collapsed, Nam said, she used his phone to order food delivery and left with it. The police arrived the following day, after the man had already died. Nam said an autopsy report he had seen concluded the man died from drug poisoning.

“In a sense, the suspect obtained guidance from ChatGPT and then used that information as a means to carry out the crime,” Nam said. “This makes the case unique in that ChatGPT searches were directly used as a tool in the commission of the offense.”

While the police are also using social media posts and CCTV cameras alongside the chat log evidence, it is the conversations with ChatGPT that may prove crucial in determining whether Kim intended to kill the victims. The next trial date is set for June.

Kim’s case echoes a growing slate of similar incidents in North America, where the alleged perpetrators used ChatGPT to ask for instructions crucial to the crime. The systems’ developers have distanced themselves from illegal activities and the pending legal cases in the U.S. and Canada.

The cases have put pressure on OpenAI.

After an 18-year-old shooter killed eight people in Tumbler Ridge, British Columbia, in February, OpenAI CEO Sam Altman wrote a letter apologizing to the community for not having informed law enforcement of the shooter’s account. The perpetrator described scenarios involving gun violence to ChatGPT for several days before the account was banned in June, eight months before the shooting. The company did not alert law enforcement. In April, families of those killed and injured filed seven federal lawsuits against OpenAI, alleging it did not take measures that could have prevented the shooting.

“While words can never be enough, I believe an apology is necessary to acknowledge the harm and irreversible loss your community has suffered,” Altman wrote, committing to work with authorities to prevent future crimes.

The suspect in the shooting at Florida State University in April 2025 was in “constant communication with ChatGPT,” the state’s attorney general, James Uthmeier, said at a news conference. The attack killed two people. Uthmeier launched a criminal investigation to determine the role OpenAI’s product played in the attack. He said ChatGPT “advised the shooter on what type of gun to use, on which ammo went with which gun, on whether or not a gun would be useful in short range.”

A spokesperson for OpenAI said at the time that “ChatGPT is not responsible for this terrible crime,” adding that the responses the chatbot gave “could be found broadly across public sources on the internet, and it did not encourage or promote illegal or harmful activity.”

The family of one of the victims in the FSU shooting sued OpenAI on Sunday.

ChatGPT and generative AI were also used “to research explosives and ignition mechanisms” in the January 2025 Tesla explosion outside Trump International Hotel Las Vegas, according to Las Vegas police. A North Carolina school therapist is alleged to have used ChatGPT to research “lethal and incapacitating drug combinations that could be ingested and injected” to poison her husband last year. In October, a 17-year-old in Florida is alleged to have used the tool in an attempt to stage his own kidnapping.

Experts say the admission of evidence from ChatGPT and similar tools in criminal cases is nascent. Yet there is scarcely a legal process the technology has left undisrupted. Lawyers and victims use chatbots to build cases, often with so many errors that judges ban their use in their courtrooms. Some defendants use them to doctor evidence or to call genuine evidence into question. Now, a body of casework pointing to the use of generative AI in crimes is emerging. For many in the field, the cases that are in the public eye are only the tip of the iceberg.

“It’s unsurprising that criminals use chatbots that are willing to help plan crimes,” said Max Tegmark, a physicist and machine learning researcher at the Massachusetts Institute of Technology and chair of the Future of Life Institute, a nonprofit organization that seeks to reduce risks from transformative technologies.

“There are fewer safety standards for AI than there are for sandwiches,” Tegmark said. “The obvious solution is binding safety standards such that companies can’t release AI systems until they refuse criminal activity.”

Some argue that using a chatbot is not so different from a simple Google search, with both producing digital information trails showing how criminals planned their actions. But Nam, the lawyer in the South Korean case, said chatbots create a new type of problem.

“The real problem is that this conversational format may allow potential criminals to engage in ‘dialogue’ with ChatGPT without a sense of guilt,” he said.

“If the suspect had asked a human being about the dosage or administration of a toxic substance, that person would naturally question the intent: why someone would want such specific information about administering poison,” he said. “However, ChatGPT does not filter such questions through ethical judgment.”

As the industry begins to grapple with the misuse of its technology, it faces questions about safeguards similar to those raised by breakthroughs of the past, such as seat belts in cars, moderation on social media or warning labels on potentially toxic products.

“We will reach an equilibrium that everyone feels comfortable with,” said Anat Lior, an assistant professor of law at Drexel University who has studied AI governance and accountability. “We’re just not sure what that balancing act looks like yet.”